The Terminator film franchise recently released its latest offering on Blu-ray, the James Cameron-produced Terminator: Dark Fate. Almost three decades later, he finally delivered a sequel to the epic sci-fi opus Terminator 2, in which there is no fate but what we make, and the fans couldn’t be more excited. And by excited I mean kind of indifferent.

Despite Cameron’s involvement with the movie and a respectable Rotten Tomatoes score (70% as of this writing), filmgoers didn’t show up in numbers that warrant immediately green-lighting any more sequels, and you can understand why. We see Arnold as a good guy, again, for the fourth time, protecting the main protagonist, for the fourth time, against a liquid metal terminator, for the third time, and using a fuel cell to finish off the bad guy, for the second time. Plus his name is Carl, he owns a drapery business and he grew a beard. That’s just dumb.

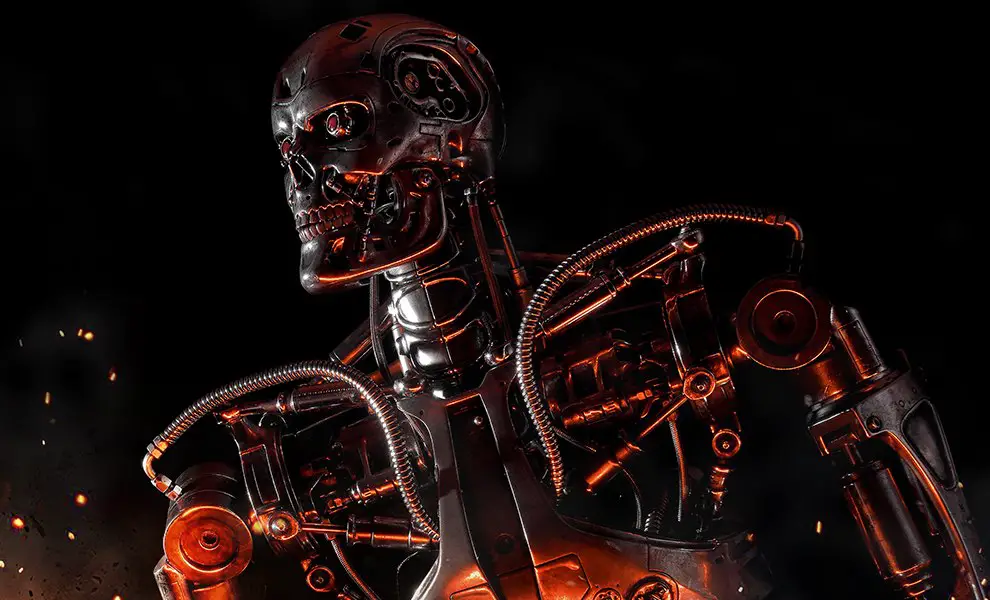

I remember watching the first Terminator film when I was in elementary school, at the precipice of my innocence. I was mesmerized by a musclebound metal man from the future ruthlessly pursuing its victim. I was also scared sh*tless. The character was menacing. He was Jason Voorhees, except his mask was human flesh. For months, I had nightmares of a robot with glowing red eyes coming for me.

Then I grew up and realized the plot of the film involved assassinating someone who would go on to affect mankind for the greater good. Phew! I never had anything to worry about after all.

I did, however, continue to wonder if that level of technology was actually possible. Could humanity someday create a device of its own destruction? Can artificial intelligence (AI) become so advanced that it decides to bypass its creators? And whatever happened to Stan Morsky and his Porsche?

Your first reaction might be that it’s just a movie and AI taking over is just a science fiction trope. Then again, Elon Musk called AI our biggest existential threat. Stephen Hawking worried that AI could spell the end of the human race. China plans to be the global leader in AI by 2030, and Vladimir Putin said the one to be the leader in this sphere will be the ruler of the world. Mark Cuban believes it’s the future of warfare. Again, that’s Mark Cuban, AI expert.

Existential risk from artificial general intelligence is the hypothesis that substantial progress in this technology could someday result in human extinction. When AI surpasses humanity in general intelligence we approach a technological singularity, and civilization is irreversibly altered. This could be spurred by a software-based AI repeatedly upgrading itself at an increasing rate.

In July of 2018, the Future of Life Institute, an organization that researches the benefits and risks of artificial intelligence, introduced a pledge to curb unethical autonomous weapon proliferation. In all, 247 organizations and 3,253 individuals, including Elon Musk, MIT physics professor Max Tegmark, and all three of the co-founders of Google’s DeepMind neural network project, committed to not develop, manufacture, or use killer robots.

This is a lot of speculation and preventative bullsh*t for something that’s never going to happen.

When interviewed about his book Wired for War, P.W. Singer, an American political scientist and specialist on 21st-century warfare, told Gizmodo that the preconditions for a successful Terminator-type uprising are not in place. Such a thing would require:

- The AI or robot having some sense of self-preservation and ambition for power, or a fear of loss of power. “We’re building robots specifically to go off and get killed,” Singer said. “No one is building them to have a survival instinct — they’re actually building them to have the exact opposite.”

- The robots to have eliminated any dependence on humans. “The Global Hawk drone may be able to take off on its own, fly on its own, but it still needs someone to put that gasoline in there,” Singer said. How would Skynet power its Terminator factory? Solar panels during a nuclear winter?

- Humans to have omitted fail-safe controls, so there’s no ability to turn the AI off. I mean, even the original NES had a reset button, right?

- The robots to gain these advantages in a way that takes humans by surprise. “You don’t get super-intelligent robots without first having semi-super-intelligent robots, and so on,” Singer said. “At each one of these stages, someone would push back.”

He’s not alone in this line of thinking. Jeff Hawkins, inventor of the PalmPilot and founder of the machine intelligence company Numenta, wrote for Vox that “intelligent machines would not have the ability to self-replicate in nature unless we go to extreme lengths to give them this capability, and currently we don’t know how to do that.” Hawkins also doesn’t believe machines will develop human-like desires:

> The neocortex is a learning system; it learns a model of the world and how everything in the world behaves, but on its own it is emotionless. The other parts of the brain, such as the spinal cord, the brain stem and the basal ganglia, are older in evolutionary time. These older brain parts are responsible for instinctive behaviors and emotions, such as hunger, anger, lust and greed.

Or, as I interpret it, it’s as likely for a Terminator to develop a sense of self-preservation as it is to get a robo-boner.

Hawkins doesn’t put much stock in the “intelligence explosion” idea, either. “There will be no explosion, no singularity. Intelligence is a product of learning,” he said. “A brain, no matter how big or how fast, does not become intelligent until it learns. Learning is a slow process requiring practice, repetition, and study over many years.”

So is AI something humanity should fear or embrace? Seems to depend on who you ask. In the same Vox article Hawkins said, “History tells us that the ultimate impact of a new technology is nearly impossible to predict. In the 1940s, no one envisioned the smartphone, the Internet or GPS satellites.” If AI does produce an inevitable doomsday scenario that will eventually come for us all, no one can say when or how. And because of that I remain skeptical.

Nevertheless, I signed that Future of Life pledge. Maybe I did do something for the greater good of humanity, after all. I’ll sleep well and keep waiting for the red-eyed harbinger of death to burst through my bedroom door.

Every February, to help celebrate Darwin Day, the Science section of AIPT cranks up the critical thinking for SKEPTICISM MONTH! Skepticism is an approach to evaluating claims that emphasizes evidence and applies the tools of science. All month we’ll be highlighting skepticism in pop culture and skepticism of pop culture.

AIPT Science is co-presented by AIPT and the New York City Skeptics.
